On subjectivity, meaning, and the limits of what can be replaced

AI will replace humans.

This statement appears today in many forms — as a promise, a warning, or a convenient shorthand for structuring the debate. On closer inspection, however, it turns out to be less controversial than imprecise. Replace which human — the one who processes information, or the one who experiences the world?

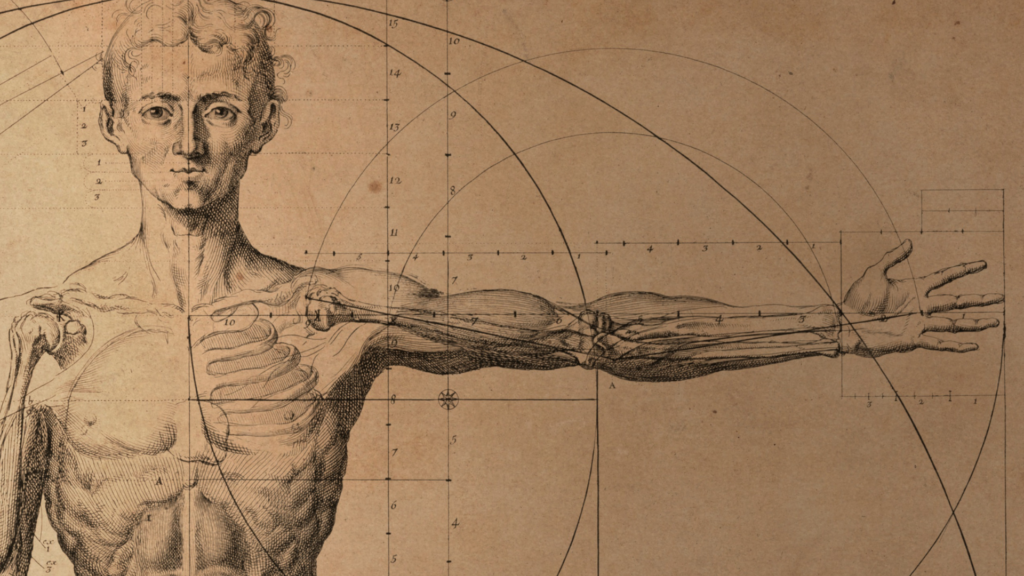

Contemporary AI systems achieve impressive results because they operate precisely where humans are easiest to model: at the level of function. Data analysis, pattern recognition, language generation — all of this can be modelled, scaled, and optimised. In this sense, AI does indeed approach what could be described as a functional equivalent of the brain, understood as a system for processing information.

The problem begins when we treat this level as a complete account of what a human being is. If thinking is reduced to information processing, understanding to prediction, and language to statistical generation, then humans become, by definition, replaceable. Not because machines have become human, but because humans have been described in a way that makes them equivalent to machines.

At the core of the issue lies a distinction we too easily take for granted: the brain and the mind are not the same, even though one cannot exist without the other. The brain can be described as a system of operations — impulses, signals, transformations — and it is precisely this operational level that we are increasingly able to replicate. The mind, however, is not a collection of operations. It is that within which operations become something for someone.

This shift from process to experience is not a difference of degree, but of kind. There is no obvious bridge between a system processing information and something meaningful emerging from it. One can imagine a system, biological or artificial, that functions perfectly at the level of the “brain” yet never becomes a mind, or at least not anytime soon. Not because it lacks something quantitatively, but because it lacks the dimension in which the world appears at all.

This difference becomes particularly visible where experience resists reduction. The song of a bird can be described as a pattern of frequencies, a sunset as a distribution of electromagnetic waves, a scent as a molecular configuration. Yet none of these representations is what actually happens when someone experiences them.

A tear that appears while watching an old recording is not a function of the image, but a return of time.

The sense of relief after making a difficult but just decision does not arise from optimising outcomes, but from restoring inner coherence. The feeling of safety in someone’s presence is not the absence of detected threats, but an immeasurable bond that makes the world feel less alien.

A scent that evokes childhood is not merely a chemical stimulus, but a structure of memory that exists only because someone lives through it.

And finally, the metaphysical anxiety in the face of finitude is not an error in the survival instinct, but a direct encounter with one’s own limits.

The same applies to the ethical dimension. Recognising suffering as a pattern is not the same as experiencing that someone’s suffering matters. For an algorithm, ethics is a set of boundary conditions — rules not to be violated in order for the system to remain “safe.” For the mind, ethics begins where the weight of responsibility appears.

In this sense, the mind does not merely assign meaning — it establishes obligation. When a person says “I love you,” “I’m sorry,” or “I choose,” they place their subjectivity at stake. Behind the word stands agency, and behind agency — the willingness to bear consequences. AI may generate the most beautiful apology imaginable, but it remains empty, because there is no “self” behind it that could feel shame, nor a life that could be altered by error. The difference between a system and a subject lies in this: a machine operates in a world without risk. AI can resolve a moral dilemma, but it cannot live through one.

Contemporary AI is not a subject. It has no point of view, no existential stake; it does not participate in the world, but operates on its representations. It can describe suffering, classify it, and generate an appropriate response — yet for it there is no difference between describing suffering and suffering itself, between simulating values and experiencing them. This is not a gap that can easily be closed by scale or complexity — it appears to belong to a different order altogether.

There is currently no basis for claiming that AI systems participate in this dimension — although the question of whether they ever might remains open. Perhaps, in a distant future, systems will emerge that cross this threshold. For now, however, everything suggests that we are operating entirely at the functional level.

In this light, the question “Will AI replace humans?” is poorly framed. What can be replaced are functions; what cannot be replaced is subjectivity. The paradox is that the more we are impressed by the capabilities of AI, the more readily we reduce humans to what is functional, measurable, and reproducible. And then the answer becomes obvious — not because machines have reached the level of humans, but because humans have been described in a way that makes them replaceable.

A “beautiful mind” is not defined by the ability to compute. It is defined by the capacity to assign meaning, to take a stance, and to bear the consequences of what one considers meaningful. This is the space in which the mind appears — and a space that, so far, no machine has entered.

In the age of AI, we need not only better models, but greater precision in understanding ourselves. Because if we lose the distinction between brain and mind, the real danger will not be AI.

It will be that we no longer know what it means to be human.